About Core Memory

Core memory

Before cheap transistor memories came along, there was magnetic memory. This was not the first type of memory, but it was the first that could be reliably mass-produced. It was based on magnetising tiny bits of ferromagnetic material. The bits were shaped like tiny rings: magnetising a ring in one direction represented a "0", magnetising it in the other direction represented a "1".

The ferromagnetic material used was some form of "ferrite", a ceramic material. It was first used for the cores of high-frequency transformers, hence the word "core".

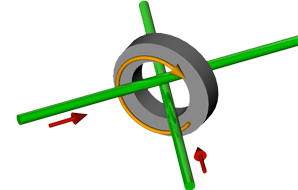

A core is flipped from "0" to "1" and vice versa by passing a current through a wire that runs through it:

Setting a bit to "1" and setting a bit to "0": the red arrow shows the direction of the electric current, the orange arrow the direction of the magnetic field.

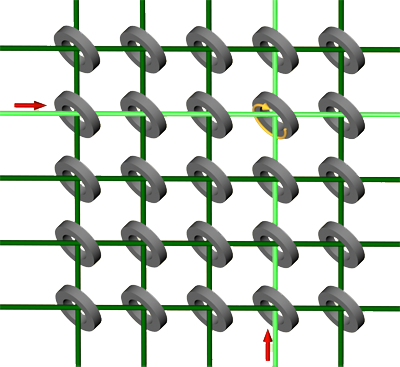

To make the bits addressable, the cores were placed at the crossing points of a grid of wires:


The trick is to send through each wire a current that is too small to magnetise a core on its own, but large enough that twice that current does magnetise one. Then only the core at the crossing point of two current-carrying wires gets magnetised, and all other cores remain unaffected.
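The selection trick can be illustrated with a small simulation. This is only a sketch under stated assumptions: the currents are in arbitrary units, and the names `THRESHOLD`, `HALF_SELECT` and `write_bit` are invented for the example, not taken from any real hardware.

```python
# Assumed values, in arbitrary units (not taken from real hardware).
THRESHOLD = 1.0    # current needed to flip the magnetisation of a core
HALF_SELECT = 0.6  # too small on its own, but twice this exceeds THRESHOLD


def write_bit(grid, row, col, value):
    """Drive one row wire and one column wire of the grid.

    Only the core at the crossing point sees the sum of both currents
    and is therefore the only one that gets magnetised.
    """
    # The direction of the current decides whether a "1" or a "0" is written.
    sign = 1 if value == 1 else -1
    for r in range(len(grid)):
        for c in range(len(grid[r])):
            current = 0.0
            if r == row:
                current += sign * HALF_SELECT
            if c == col:
                current += sign * HALF_SELECT
            # Cores on only one driven wire stay below the threshold.
            if abs(current) > THRESHOLD:
                grid[r][c] = 1 if current > 0 else 0


grid = [[0] * 4 for _ in range(4)]
write_bit(grid, 2, 1, 1)
for line in grid:
    print(line)
```

The other cores on row 2 and on column 1 each see only a single half current, so they keep their previous state.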

The sense wire

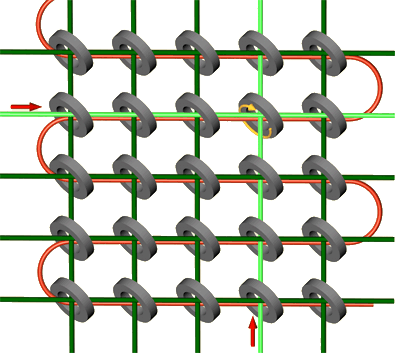

An extra, single wire runs in zig-zag fashion through all the cores (red in the drawing and photos):

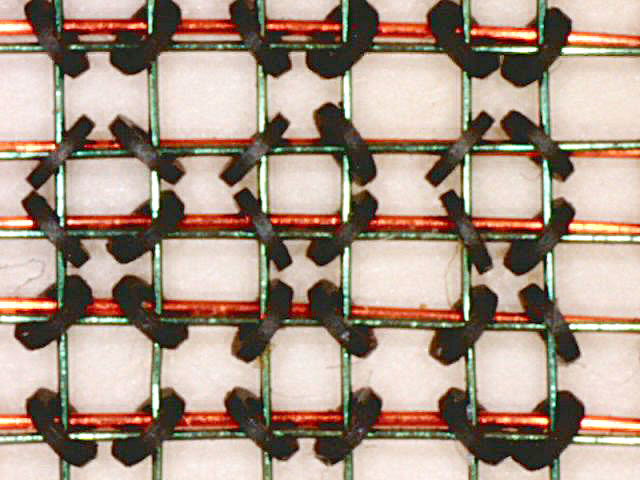

A photo of part of a 1968 IBM core memory. For manufacturing reasons the cores are not all aligned in the same way, but this is irrelevant.

To read the state of a certain core, you would pass currents to set it to "1" (say). If the core was already at "1", nothing would happen, but if it was at "0", its magnetisation would flip. A third wire (red in the photo), called the "sense wire", runs through all the cores in zig-zag fashion. Whenever a core changes magnetisation, this wire gives an electric pulse. No pulse: the core was at "1"; pulse: the core flipped and hence was at "0".

Unfortunately this way of reading the state destroys what was there and always leaves the core at "1". It was therefore necessary to set the core back to its original state after having read its value.
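The read-and-restore cycle can be sketched as follows. The `Core` class and the `read_bit` function are hypothetical names introduced for this illustration; the sketch models a single core and ignores the wiring of the grid.

```python
class Core:
    """A single core, modelled only by its magnetisation state (0 or 1)."""

    def __init__(self, state=0):
        self.state = state

    def set(self, value):
        """Force the core to `value`.

        Returns True if the magnetisation flipped, which is when the
        sense wire would give a pulse.
        """
        flipped = self.state != value
        self.state = value
        return flipped


def read_bit(core):
    """Destructive read followed by a write-back of the original value."""
    pulse = core.set(1)        # after this, the core is always at "1"
    value = 0 if pulse else 1  # pulse: it was "0"; no pulse: it was "1"
    if value == 0:
        core.set(0)            # restore the state destroyed by the read
    return value


core = Core(0)
print(read_bit(core), core.state)
```

Without the write-back step, every core that had been read would be left at "1", whatever it held before.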

A typical speed was one microsecond for a memory access (read and reset or write).

In those days, memory was very expensive, there was little of it, and there were no graphical user interfaces. When anything went wrong with the programming, there was often no other solution than to inspect the state of the memory by printing it as a set of bits and to figure out what had happened to the data and/or the program. Such a primitive listing of hexadecimal or octal numbers was naturally called a "core dump".